

What is Data Integration?

Data integration combines data from disparate sources, reached through a variety of access methods, into a unified view. A complete data integration solution delivers data from trusted sources in a timely manner, after it has been cleansed and transformed into meaningful, valuable information. Access methods include querying databases (JDBC, ODBC), parsing formatted files (fixed-width, delimited, CSV, XML), extracting archives (ZIP, JAR, TAR), retrieving files by file transfer (FTP, SCP), messaging (JMS, AMQP), and consuming web services (SOAP, REST). A data integration strategy commonly uses one or more of the following practices:

- Extract, Transform, Load (ETL) – extract from source, transform into structure, and load into target.

- Enterprise Information Integration (EII) – unified view of data and information for an entire organization.

- Enterprise Data Replication (EDR) – synchronization from real-time processing of captured data changes.

- Enterprise Application Integration (EAI) – middleware that enables integration of systems and applications.
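As a rough illustration of the ETL practice listed above, the following Python sketch parses a delimited file, cleans it, and loads it into a target database. The file contents, the `item` table, and the function names are assumptions made up for this example; a production pipeline would add error handling, logging, and incremental loads.

```python
import csv
import io
import sqlite3

# Hypothetical delimited export from a source system, inlined so the
# sketch is self-contained.
SOURCE_CSV = """sku,description,price
1001, Blue Mug ,4.50
1002,Red Plate,7.25
"""

def extract(text):
    """Extract: parse the delimited text into one dictionary per row."""
    return list(csv.DictReader(io.StringIO(text)))

def transform(rows):
    """Transform: trim stray whitespace and convert prices to integer cents."""
    return [
        (row["sku"].strip(),
         row["description"].strip(),
         round(float(row["price"]) * 100))
        for row in rows
    ]

def load(conn, records):
    """Load: write the transformed records into the target table."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS item "
        "(sku TEXT PRIMARY KEY, description TEXT, price_cents INTEGER)"
    )
    conn.executemany("INSERT OR REPLACE INTO item VALUES (?, ?, ?)", records)
    conn.commit()

def run_etl(conn, text=SOURCE_CSV):
    """Run the three stages in order."""
    load(conn, transform(extract(text)))

conn = sqlite3.connect(":memory:")  # in-memory database stands in for the target
run_etl(conn)
```

Keeping the three stages as separate functions means each can be tested or swapped independently, for example replacing `extract` with a database query while leaving `transform` and `load` unchanged.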

Master Data Management

Master data management (MDM) is a system used by an organization to link all of its critical data to a single source, called a master file, that provides a common point of reference. MDM maintains a consistent view of core business entities whose data is spread across a range of application systems. The categories of data managed by MDM vary by industry and organization, but typically include customers, suppliers, products, employees, and finances. When MDM is used to handle customer data, it is called customer data integration (CDI). Techniques for implementing an MDM system include data consolidation into a single data store, data federation to present a single virtual view of data, and propagation from the master file to other systems using canonical data exchange formats.

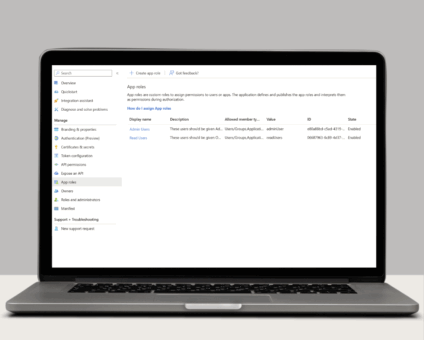
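The consolidation technique can be sketched in a few lines of Python. This is a minimal illustration, not a description of any particular MDM product: the `crm` and `billing` sources, the field names, and the choice of a normalized email address as the matching key are all assumptions for the example.

```python
def normalize_email(email):
    """Build the matching key: trimmed, lower-cased email address."""
    return email.strip().lower()

def consolidate(*sources):
    """Merge records from several systems into one master record per customer.

    Sources are processed in order; a later source only fills in fields
    that earlier sources left empty.
    """
    master = {}
    for records in sources:
        for record in records:
            key = normalize_email(record["email"])
            merged = master.setdefault(key, {"email": key})
            for field, value in record.items():
                if field != "email" and value and not merged.get(field):
                    merged[field] = value
    return master

# Hypothetical records for the same customer held in two systems.
crm = [{"email": "Ann@Example.com", "name": "Ann Lee", "phone": ""}]
billing = [{"email": "ann@example.com ", "name": "A. Lee", "phone": "555-0100"}]

master = consolidate(crm, billing)
```

Here the order of the arguments acts as a simple survivorship rule: the CRM name wins because it arrives first, while the phone number is taken from billing because the CRM left it blank. Real matching usually needs more than one key and some fuzzy comparison.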

Application Integration

Enterprise applications are driven by data that must be sourced from systems spread across the enterprise. Application integration is the process of sourcing and combining that data into a single application database to support the operation of the application. For example, a retail point of sale system needs items and prices from the merchandising system and sales tax rates from the tax system. Using a structured framework or middleware for application integration can reduce dependencies, complexity, and impromptu point-to-point connections between systems in the enterprise.
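The point of sale example might look like the following Python sketch, which combines item prices from one source with tax rates from another into a single catalog for the application. The data structures, tax categories, and rates are invented for illustration and do not reflect any real merchandising or tax system.

```python
from decimal import Decimal, ROUND_HALF_UP

# Hypothetical extract from the merchandising system.
merchandising_items = {
    "1001": {"description": "Blue Mug", "price": Decimal("4.50"), "tax_category": "general"},
    "2001": {"description": "Bread", "price": Decimal("2.00"), "tax_category": "grocery"},
}

# Hypothetical extract from the tax system (rate per tax category).
tax_rates = {"general": Decimal("0.0825"), "grocery": Decimal("0.00")}

def build_pos_catalog(items, rates):
    """Combine both sources into the records the POS application needs."""
    catalog = {}
    for sku, item in items.items():
        rate = rates[item["tax_category"]]
        # Round tax to whole cents, half up.
        tax = (item["price"] * rate).quantize(Decimal("0.01"), rounding=ROUND_HALF_UP)
        catalog[sku] = {
            "description": item["description"],
            "price": item["price"],
            "tax": tax,
            "total": item["price"] + tax,
        }
    return catalog

pos_catalog = build_pos_catalog(merchandising_items, tax_rates)
```

Because the POS reads only from its own combined catalog, it does not need a live connection to either source system at the moment of sale.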

Data Warehouse, Data Hub, Data Lake

An enterprise data warehouse is a system used for business intelligence, including reporting and data analysis. It is a central repository of integrated data from disparate sources, storing current and historical data from multiple areas of the business. The source data is cleaned, transformed, cataloged, and made available for data mining, online analytical processing, market research, and decision support. A simpler form of the data warehouse is the data mart, which is focused on a single subject area, such as sales, marketing, or finance. A less formal alternative to the data warehouse is the data lake, which holds organizational data in raw form without any transformation.
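To make the relationship between a warehouse and a data mart concrete, here is a small Python sketch using SQLite. The `warehouse_sales` table, the `sales_mart` view, and the sample rows are hypothetical; a real warehouse would hold many subject areas and far more history.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
-- Hypothetical warehouse table holding cleaned, integrated sales history.
CREATE TABLE warehouse_sales (sale_date TEXT, store TEXT, amount_cents INTEGER);
INSERT INTO warehouse_sales VALUES
  ('2023-01-05', 'north', 1200),
  ('2023-01-20', 'north', 800),
  ('2023-02-02', 'south', 500);

-- Hypothetical sales data mart: a subject-specific monthly summary.
CREATE VIEW sales_mart AS
  SELECT substr(sale_date, 1, 7) AS month,
         store,
         SUM(amount_cents) AS total_cents
  FROM warehouse_sales
  GROUP BY substr(sale_date, 1, 7), store;
""")

# Reporting tools would query the mart rather than the raw history.
monthly = conn.execute(
    "SELECT month, store, total_cents FROM sales_mart ORDER BY month, store"
).fetchall()
```

A view is only one way to build a mart; it could equally be a separate set of tables refreshed on a schedule.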

Data Migration

Data migration is the process of transferring data from one storage system or format to another, and is commonly needed during server or storage replacements, upgrades, application migration, and data center relocation. Data from the old system is mapped to the new one using a programmatic approach that extracts, transforms, and loads the data. Automated data cleaning is often performed as part of the migration to improve data quality by de-duplicating records, removing obsolete data, and reformatting values. After the new system is loaded, the results are verified to confirm that the data was translated accurately. Both systems may run in parallel for a period of time to ease the transition and identify areas of disparity.
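The mapping, cleaning, and verification steps described above can be sketched as follows. The legacy column names (`CUST_NO`, `NAME`, `SIGNUP`), the target field names, and the date formats are assumptions chosen for the example, and the verification shown is only a basic key comparison.

```python
from datetime import datetime

# Hypothetical rows exported from the old system.
legacy_rows = [
    {"CUST_NO": "17", "NAME": "ann lee", "SIGNUP": "03/15/2019"},
    {"CUST_NO": "17", "NAME": "ann lee", "SIGNUP": "03/15/2019"},  # duplicate
    {"CUST_NO": "42", "NAME": "BOB RAY", "SIGNUP": "11/02/2021"},
]

def migrate(rows):
    """Map legacy fields to the new schema, de-duplicating and reformatting."""
    migrated = {}
    for row in rows:
        customer_id = int(row["CUST_NO"])
        if customer_id in migrated:
            continue  # de-duplicate on the customer number
        migrated[customer_id] = {
            "customer_id": customer_id,
            "name": row["NAME"].title(),  # normalize capitalization
            # Reformat MM/DD/YYYY into ISO 8601 (YYYY-MM-DD).
            "signup_date": datetime.strptime(row["SIGNUP"], "%m/%d/%Y").date().isoformat(),
        }
    return list(migrated.values())

def verify(source_rows, target_rows):
    """Check that every source customer arrived in the target exactly once."""
    source_ids = {int(r["CUST_NO"]) for r in source_rows}
    target_ids = [r["customer_id"] for r in target_rows]
    return source_ids == set(target_ids) and len(target_ids) == len(set(target_ids))

new_rows = migrate(legacy_rows)
migration_ok = verify(legacy_rows, new_rows)
```

In practice, verification often goes further, for example by comparing row counts per table, checksums of key columns, or the output of the same report run on both systems during the parallel period.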